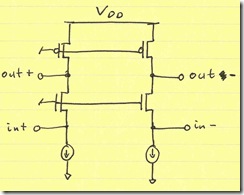

Consider the circuit shown above:

Let’s say that you’ve designed the circuit with a supply voltage (VDD) of 2.4 V. It’s performing very well. You exactly meet the specified linearity and noise requirements, and you are within the desired current limits. The power dissipated in the PMOS and NMOS devices, which dictates SNR, is:

PMOS: IDP×VDSATP = 10 mA×0.6 V

NMOS: IDN×VDSATN = 10 mA×1.2 V

You have 0.6 V of headroom at the output for signal swing.

The differential input impedance is 2/gm of the NMOS transistors:

Rin = 2/gmn = VDSATN / IDN = 1.2 V / 10 mA = 120 Ω

where we have used the relation gm = 2×ID/VDSAT, which excludes short-channel effects. Including short-channel effects changes the relationship, but won’t change the conclusion of this topic.
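As a quick check of the arithmetic, here is a minimal Python sketch of the same calculation. It assumes the square-law relation gm = 2×ID/VDSAT quoted above; the function names are only illustrative and are not from any library.

```python
def gm_square_law(i_d, v_dsat):
    """Square-law transconductance, gm = 2*ID/VDSAT (short-channel effects ignored)."""
    return 2.0 * i_d / v_dsat


def rin_differential(i_d, v_dsat):
    """Differential input resistance of the common-gate pair, Rin = 2/gm."""
    return 2.0 / gm_square_law(i_d, v_dsat)


# Design point from the text: IDN = 10 mA, VDSATN = 1.2 V
rin = rin_differential(i_d=10e-3, v_dsat=1.2)
print(f"Rin = {rin:.1f} ohm")
```

Because the two factors of 2 cancel, Rin reduces to VDSATN / IDN, which is why the input impedance is tied so directly to the voltage and current budget of the input devices.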

You need a balun anyway, so you use a transformer that steps up from 50 Ω to 120 Ω.

Great. The product ships and it sells well.

Your boss/customer comes by and asks you for a next generation part. They want to simplify the power regulation scheme on their products, so they absolutely have to have a 1.2 V supply. There’s no negotiation on this supply voltage.

So, you start deciding how to scale this design to maintain SNR. You may want to move to a different process node, but I’ll exclude the effects of doing so; you’ll find that they won’t change the result. You decide that since your supply voltage has been halved, you should halve every VDSAT as well. You realize, though, that to maintain SNR you must keep each ID×VDSAT product constant, so you then need to double the current:

PMOS: IDP×VDSATP = 20 mA×0.3 V

NMOS: IDN×VDSATN = 20 mA×0.6 V

You have 0.3 V of headroom at the output for signal swing.

Essentially, you are maintaining power by doubling current and halving voltage. That’s okay for your customer: they just want a lower supply, not less power. The input impedance is now 30 Ω because Rin = VDSATN / IDN = 0.6 V / 20 mA, but more fundamentally, the circuit expects half the input voltage and twice the input current.

You now need a transformer that steps down from 50 Ω to 30 Ω.
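The scaling argument can be generalized: if every voltage is multiplied by a factor k and every current by 1/k, the power ID×VDSAT is unchanged while Rin = VDSATN / IDN scales as k². The sketch below illustrates this under the same square-law assumption. The helper names are hypothetical, and the turns ratio assumes an ideal, lossless transformer, which is a simplification not discussed in the post.

```python
import math


def rin_differential(i_d, v_dsat):
    """Differential input resistance, Rin = 2/gm = VDSAT/ID (square-law assumption)."""
    return v_dsat / i_d


def scale_design(i_d, v_dsat, k):
    """Scale voltage by k and current by 1/k so that ID*VDSAT stays constant."""
    return i_d / k, v_dsat * k


def turns_ratio(r_source, r_load):
    """Turns ratio (load side : source side) of an ideal transformer matching r_source to r_load."""
    return math.sqrt(r_load / r_source)


i_d0, v_dsat0 = 10e-3, 1.2  # NMOS design point at VDD = 2.4 V
for k in (1.0, 0.5):        # k = 0.5 corresponds to VDD = 1.2 V
    i_d, v_dsat = scale_design(i_d0, v_dsat0, k)
    rin = rin_differential(i_d, v_dsat)
    n = turns_ratio(50.0, rin)
    print(f"k={k}: ID={i_d * 1e3:.0f} mA, VDSAT={v_dsat:.1f} V, "
          f"P={i_d * v_dsat * 1e3:.0f} mW, Rin={rin:.0f} ohm, turns ratio={n:.2f}")
```

Halving the supply (k = 0.5) leaves the 12 mW in the NMOS devices untouched but cuts Rin by a factor of four, from 120 Ω to 30 Ω, so the transformer changes from a step-up to a step-down.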

I haven’t seen this relationship between supply voltage and RF input impedance published anywhere else. Post a comment if I’ve missed it. I have only shown it for the common-gate amplifier. However, it is my hunch (just based on the fundamentals) that the same conclusion will be reached for other RF circuits (inductor-degenerated LNAs, Gilbert cells, etc.).

The relationship leads me to believe that as CMOS scales to lower and lower voltages, RF designers will start designing for lower and lower impedance. Alternatively, RF designers may find the optimum supply voltage for a 50-Ω input impedance and stick with it. That’s possibly why many RF transceivers are at 2.4 V. The curious thing about that voltage is that most RF front-ends I’ve come across operating at 2.4 V have a step-up transformer to 200 Ω.
